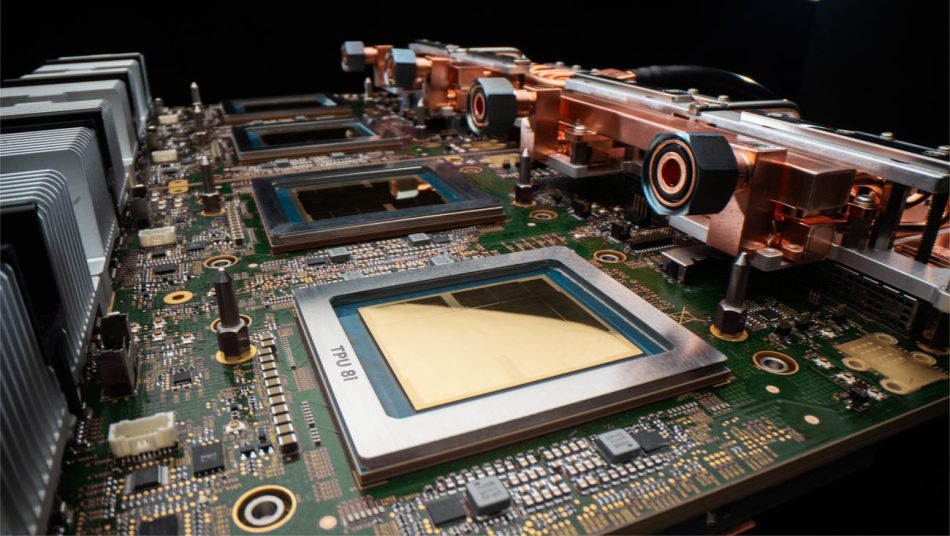

Our eighth generation TPUs: two chips for the agentic era

Google is proud to announce the launch of its eighth generation of Tensor Processing Units (TPUs), which includes two specialized chips: the TPU 8t and the TPU 8i. Both chips are custom-engineered to meet the demands of the next generation of supercomputing, with a focus on efficiency and scale.

Overview of TPU 8t and TPU 8i

The TPU 8t is designed as a training powerhouse, specifically built to accelerate the development of complex AI models. In contrast, the TPU 8i specializes in high-speed inference, enabling low-latency responses for collaborative AI agents. Together, these chips aim to enhance the performance and energy efficiency of AI workloads.

Key Features of the Eighth Generation TPUs

- TPU 8t: A robust training chip that significantly speeds up the development of massive AI models.

- TPU 8i: An inference chip designed for low-latency processing to support fast AI interactions.

- Custom Hardware: Both chips are custom-designed silicon that delivers improved performance and energy efficiency compared to previous generations.

- Scalability: These new systems are designed to scale with your AI workloads, accommodating the growing demands of AI applications.

The Evolution of TPUs

The introduction of TPU 8t and TPU 8i marks the culmination of over a decade of development in AI chip technology. Google has consistently pushed the boundaries of machine learning (ML) supercomputing, and these new chips are no exception. They are designed to meet the evolving needs of AI models that require reasoning, multi-step workflows, and continuous learning from their actions.

Collaboration with Google DeepMind

In partnership with Google DeepMind, the TPU 8t and TPU 8i were developed to handle the most demanding AI workloads. This collaboration has allowed Google to leverage insights from both hardware and software development, ensuring that the chips are optimized for the latest model architectures.

Performance and Efficiency

The key insight behind the original TPU design remains relevant today: by customizing and co-designing silicon with hardware, networking, and software, Google can deliver significantly greater power efficiency and performance. This approach has set the standard for various ML supercomputing components, including:

- Custom numerics

- Liquid cooling systems

- Custom interconnects
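
To make "custom numerics" concrete, here is a minimal sketch of reduced-precision rounding. The article does not say which number formats the TPU 8t or TPU 8i use; bfloat16 (1 sign bit, 8 exponent bits, 7 mantissa bits) is assumed here only because it is a well-known format associated with earlier TPUs. The function names are hypothetical, and the sketch handles finite inputs only.

```python
import struct


def to_bfloat16_bits(x: float) -> int:
    """Return the 16-bit bfloat16 pattern for a finite float (illustrative only)."""
    # Reinterpret the value as its 32-bit IEEE 754 single-precision pattern.
    (bits,) = struct.unpack("<I", struct.pack("<f", x))
    # bfloat16 keeps the top 16 bits of float32. Round to nearest, ties to even,
    # by adding a bias that depends on the lowest bit that will be kept.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def from_bfloat16_bits(b: int) -> float:
    """Expand a 16-bit bfloat16 pattern back to a Python float."""
    (x,) = struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))
    return x


def round_to_bfloat16(x: float) -> float:
    """Round a finite float to the nearest bfloat16-representable value."""
    return from_bfloat16_bits(to_bfloat16_bits(x))


if __name__ == "__main__":
    for value in (1.0, 1.0 + 2**-7, 3.14159, 1e-3):
        print(value, "->", round_to_bfloat16(value))
```

The trade-off this illustrates is that bfloat16 keeps the full exponent range of float32 while giving up mantissa precision, which halves the storage and bandwidth needed per value.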

Real-World Applications

Pioneering organizations are already using TPUs to push the boundaries of what’s possible in AI. For example, Citadel Securities has chosen TPUs to power its cutting-edge AI workloads, demonstrating the chips’ capabilities in real-world applications.

Anticipating Future Demands

Hardware development cycles are inherently longer than software cycles, which means that Google must anticipate future technologies and demands when bringing new TPUs to market. The eighth-generation TPUs were designed in anticipation of rising customer demand for inference as frontier AI models are deployed in production environments.

Conclusion

The introduction of the TPU 8t and TPU 8i represents a significant advancement in AI chip technology, designed to meet the challenges of the agentic era. With enhanced performance, energy efficiency, and scalability, these chips are set to empower developers and organizations to build smarter AI tools that can reason, solve problems, and learn effectively.

Note: The information provided in this article reflects Google’s announcements and insights regarding its eighth generation TPUs as of October 2023.