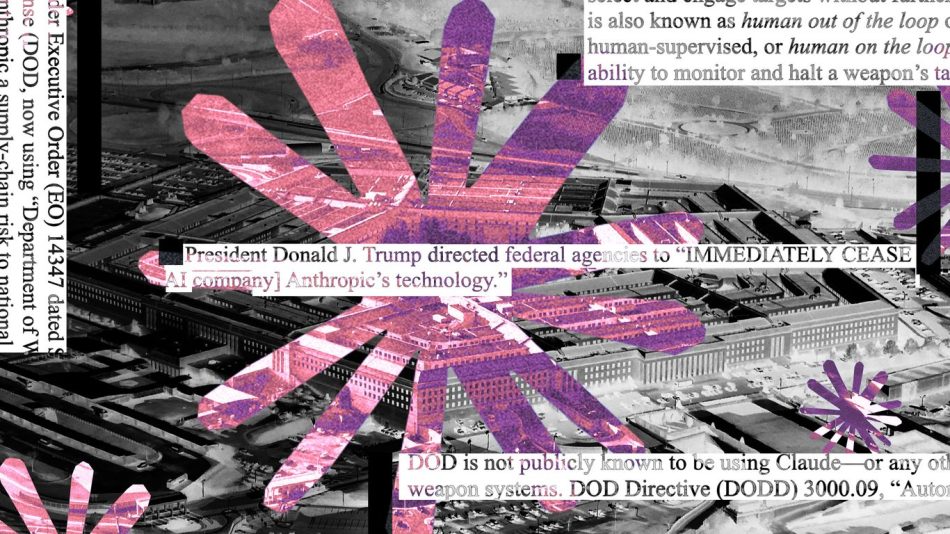

Anthropic: No “kill switch” for AI in classified settings

In recent discussions of artificial intelligence (AI) safety, the “kill switch” has emerged as a proposed way to stop AI systems from acting outside their intended parameters. However, Anthropic, a leading AI research company, has stated that implementing such a mechanism in classified settings is not feasible. This article explores the implications of that stance, the challenges of AI safety, and the broader context of AI in sensitive environments.

Understanding the “Kill Switch” Concept

The term “kill switch” refers to a safety mechanism that can deactivate or shut down an AI system if it behaves unpredictably or poses a risk to human safety. The idea is that a reliable way to turn off AI systems lets developers mitigate the risks of advanced AI technologies.

However, the effectiveness of a kill switch is contingent upon several factors:

- Reliability: The kill switch must work consistently without failure.

- Accessibility: Operators must be able to activate the kill switch quickly and easily in emergencies.

- Design Complexity: The more complex an AI system is, the harder it may be to implement a straightforward kill switch.
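
The three factors above can be illustrated with a small sketch. This is a hypothetical, simplified example rather than a description of any real system or of Anthropic's approach: the names `KillSwitch` and `run_steps` are invented for illustration, and the "AI system" is reduced to a list of steps that run one after another.

```python
import threading


class KillSwitch:
    """Hypothetical latch: once tripped, it stays tripped."""

    def __init__(self) -> None:
        self._tripped = threading.Event()

    def trip(self) -> None:
        # Accessibility: a single call, safe to make from any thread.
        self._tripped.set()

    def is_tripped(self) -> bool:
        return self._tripped.is_set()


def run_steps(steps, switch):
    """Run callables in order, checking the switch before each one.

    Reliability: the check lives in the loop, outside the steps,
    so an individual step cannot skip it.
    """
    results = []
    for step in steps:
        if switch.is_tripped():
            break
        results.append(step())
    return results


switch = KillSwitch()
# The second step trips the switch while it runs, then still returns "b".
steps = [lambda: "a", lambda: switch.trip() or "b", lambda: "c"]
completed = run_steps(steps, switch)  # the third step never runs
```

Note that this cooperative check only takes effect between steps: a step that is already running finishes before the loop stops. That gap is one small instance of the design-complexity problem, since interrupting work in progress is considerably harder than declining to start the next piece of it.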

Anthropic’s Position on AI Safety

Anthropic has taken a distinct position on kill switches in AI systems, particularly in classified settings. The company’s co-founder, Dario Amodei, has said that classified environments present significant challenges for deploying such safety mechanisms.

Amodei noted that the classified nature of many AI applications means that transparency and oversight are often limited. This lack of visibility can complicate the development and implementation of safety features like kill switches. In addition, the rapid pace of AI development can outstrip the ability to establish robust safety protocols.

Challenges of Implementing Kill Switches

Anthropic and other AI developers face several challenges when implementing kill switches:

1. Technical Limitations

AI systems, especially those based on machine learning, can be highly complex. They learn from vast amounts of data and can adapt their behavior based on new inputs. This adaptability makes it difficult to create a simple and effective kill switch that can account for all possible scenarios.

2. Operational Constraints

In classified settings, operational constraints can limit the ability to implement safety features. For example, the need for rapid deployment and real-time decision-making can hinder the integration of safety protocols.

3. Ethical Considerations

Kill switches also carry ethical implications. For instance, if an AI system is designed to make life-and-death decisions, the ability to override those decisions raises questions about accountability and moral responsibility.

The Broader Context of AI in Classified Settings

The use of AI in classified settings, such as military applications and national security, is a growing trend. These environments often require advanced technologies to process large amounts of data and make informed decisions quickly. However, the deployment of AI in such sensitive areas raises several important issues:

1. Security Risks

AI systems can be vulnerable to hacking and other forms of cyberattacks. If adversaries gain control of an AI system, they could potentially manipulate its functions, leading to catastrophic outcomes.

2. Accountability

When AI systems are involved in critical decision-making processes, determining accountability becomes complex. If an AI makes a mistake, it is often unclear who is responsible: the developers, the operators, or the AI itself.

3. Regulatory Challenges

The rapid advancement of AI technology often outpaces existing regulatory frameworks. Policymakers struggle to create guidelines that ensure the safe and ethical use of AI in classified settings while fostering innovation.

Future Directions for AI Safety

Given the challenges associated with implementing kill switches and the broader implications of AI in classified settings, the future of AI safety will likely involve a multi-faceted approach:

- Enhanced Collaboration: Collaboration between AI developers, ethicists, and policymakers will be crucial to establish best practices and safety standards.

- Robust Testing Protocols: Developing comprehensive testing protocols can help identify potential risks and vulnerabilities in AI systems before deployment.

- Transparency and Oversight: Increasing transparency in AI development processes can facilitate better oversight and accountability.

Conclusion

Anthropic’s stance on the absence of a kill switch for AI in classified settings highlights the complexities and challenges of ensuring AI safety. As AI technology continues to evolve, it is essential for stakeholders to engage in meaningful discussions about the ethical, operational, and technical aspects of AI deployment in sensitive environments. The future of AI safety will depend on collaboration, transparency, and a commitment to responsible innovation.

Note: The information presented in this article is based on current understanding and discussions surrounding AI safety and may evolve as new developments occur in the field.